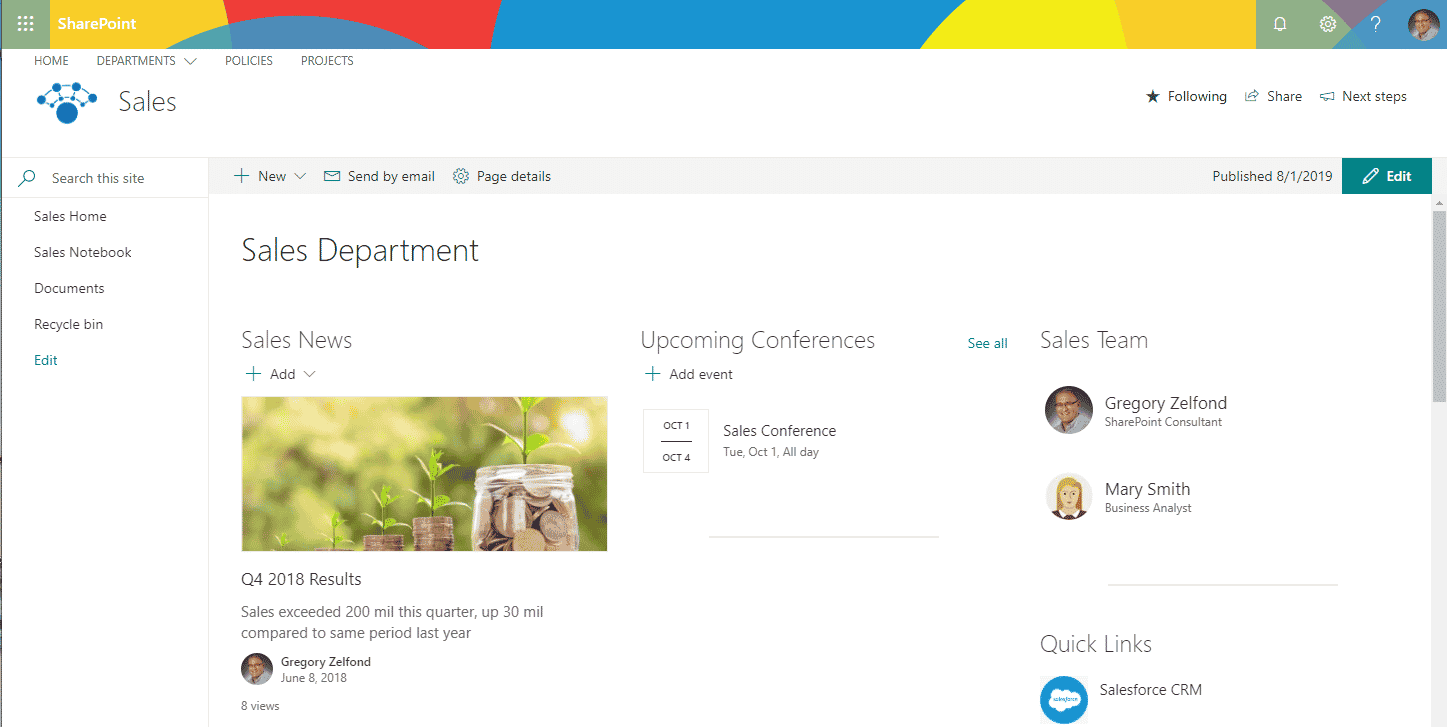

In my previous post, I explained how to upload large files of up to 10 GB to SharePoint Online, in line with the revised upload limit. An upload operation, as we know, is incomplete without download. I mistakenly assumed that my previous file download code would work for these larger files as well. But just as I had to rewrite the upload code to accommodate the new limit, the download code also needed a complete makeover.

I initially tried the following approaches, with no success.

    FileInformation fileInformation = File.OpenBinaryDirect(clientContext, serverRelativeUrl);

    File oFile = web.GetFileByServerRelativeUrl(strServerRelativeURL);
    ClientResult<Stream> stream = oFile.OpenBinaryStream();

    Error: Invalid MIME content-length header encountered on read.

In this approach, I was trying to download a file, say 10 GB in size, in one go. However, even for a 64-bit managed application on a 64-bit Windows operating system, you can create an object of no more than 2 GB.

In the Visual Studio Diagnostic Tools window, I could see that the former approach failed after downloading around 1.5 GB of data, whereas the OpenBinaryStream download failed after only 800-900 MB. This is because MemoryStream uses a byte[] internally. Whenever its internal buffer cannot hold the incoming data, it simply doubles its size, so the error gets thrown a lot earlier than with the OpenBinaryDirect approach.

After a lot of digging, I figured out that the only way I could download such a huge file was by using a Remote Procedure Call (RPC). Here's the code for downloading a large file from SharePoint Online.

    SharePointOnlineCredentials spCredentials = (SharePointOnlineCredentials)ctx.Credentials;
    String authCookieValue = spCredentials.GetAuthenticationCookie(targetSite);
    String requestUrl = ctx.Url + "/_vti_bin/_vti_aut/author.dll";

    String method = Utility.GetEncodedString("get document:15.");
    serviceName = Utility.GetEncodedString();
    documentName = documentName.Substring(1);
    documentName = Utility.GetEncodedString(documentName);
    String docVersion = String.Empty; // directly passed as empty

    String rpcCallString = String.Format("method=",
        method, serviceName, documentName, oldThemeHtml, force, getOption, timeOut, expandWebPartPages);

RPC not only returns the actual file but also prefixes the file content with HTML, in case you're not familiar with this format. The following fragments are from the HTML sliced out of the download of a file, 90 MB.docx.

    message=successfully retrieved document 'Doc lib/90 MB.docx' from 'Doc lib/90 MB.docx'
    SW|0x010100623C9C49E42A00419C619EE6EAF8D8C1
    display_urn:schemas-microsoft-com:office:office#Editor

As you can see, it just contains the file meta info. So, in order to get the actual file, we need to remove this HTML from the response. That is exactly why I get the index of the end of this HTML and set the value of startPos (the starting position for file writing) accordingly.

I have tested the above code, and it worked perfectly well for downloads of files up to 9.7 GB.

Finally, this post from Steve Curran really helped me clear up my doubts regarding RPC.
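The buffer growth described above for MemoryStream can be observed directly. The following is a minimal sketch and not part of the download code: the class and method names are hypothetical, and it relies only on the standard `MemoryStream.Capacity` property. It writes fixed-size chunks and records every capacity the stream reports after a resize.

```csharp
using System;
using System.Collections.Generic;
using System.IO;

public static class MemoryStreamGrowth
{
    // Hypothetical helper: writes `chunks` blocks of `chunkSize` bytes and
    // returns each new capacity the stream reported after it resized.
    public static List<int> RecordCapacities(int chunkSize, int chunks)
    {
        var capacities = new List<int>();
        var chunk = new byte[chunkSize];
        using (var stream = new MemoryStream())
        {
            int last = stream.Capacity;
            for (int i = 0; i < chunks; i++)
            {
                stream.Write(chunk, 0, chunk.Length);
                if (stream.Capacity != last)
                {
                    last = stream.Capacity;
                    capacities.Add(last);
                }
            }
        }
        return capacities;
    }

    public static void Main()
    {
        foreach (int capacity in RecordCapacities(1024, 64))
        {
            Console.WriteLine("Capacity grew to {0} bytes", capacity);
        }
    }
}
```

Each resize allocates a new, larger array and copies the old contents into it, so a download buffered this way can need noticeably more memory than the data received so far. That would be consistent with the earlier failure seen with OpenBinaryStream.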
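The prefix-stripping step described above can be sketched as follows. This is an illustration under stated assumptions, not the original code: the helper names are hypothetical, the marker that ends the HTML prefix is supplied by the caller (the `</html>` plus newline used in the demo is an assumption), and the prefix is assumed to fit inside the first block read. Only the name `startPos` comes from the text.

```csharp
using System;
using System.IO;
using System.Text;

public static class RpcResponseHelper
{
    // Returns the index just past the first occurrence of `marker`
    // within buffer[0..count), or -1 if the marker is not present.
    public static int IndexAfter(byte[] buffer, int count, byte[] marker)
    {
        for (int i = 0; i <= count - marker.Length; i++)
        {
            int j = 0;
            while (j < marker.Length && buffer[i + j] == marker[j]) j++;
            if (j == marker.Length) return i + marker.Length;
        }
        return -1;
    }

    // Copies `response` to `output`, skipping everything up to and including
    // the marker. Assumes the whole prefix fits in the first `headerSize` bytes.
    public static void SaveWithoutPrefix(Stream response, Stream output,
                                         byte[] marker, int headerSize = 64 * 1024)
    {
        var buffer = new byte[headerSize];
        int filled = 0;
        int read;
        // Read may return fewer bytes than requested, so loop until the
        // first block is full or the stream ends.
        while (filled < buffer.Length &&
               (read = response.Read(buffer, filled, buffer.Length - filled)) > 0)
        {
            filled += read;
        }

        int startPos = IndexAfter(buffer, filled, marker);
        if (startPos < 0)
        {
            throw new InvalidDataException("Prefix marker not found in the first block.");
        }

        // Write the file bytes that follow the prefix, then stream the rest
        // in chunks instead of buffering the whole file in memory.
        output.Write(buffer, startPos, filled - startPos);
        response.CopyTo(output);
    }
}

public static class PrefixDemo
{
    public static void Main()
    {
        byte[] marker = Encoding.ASCII.GetBytes("</html>\n"); // assumed marker
        byte[] body = Encoding.ASCII.GetBytes("<html>meta info</html>\nFILE-BYTES");
        using (var input = new MemoryStream(body))
        using (var output = new MemoryStream())
        {
            RpcResponseHelper.SaveWithoutPrefix(input, output, marker);
            Console.WriteLine(Encoding.ASCII.GetString(output.ToArray())); // prints "FILE-BYTES"
        }
    }
}
```

In a real download, `output` would be a `FileStream`, so the file is written to disk as it arrives and no single object has to approach the 2 GB limit.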
